Community curated code

vLLM is an engine for LLM inference and serving, designed for high throughput and efficient memory management.

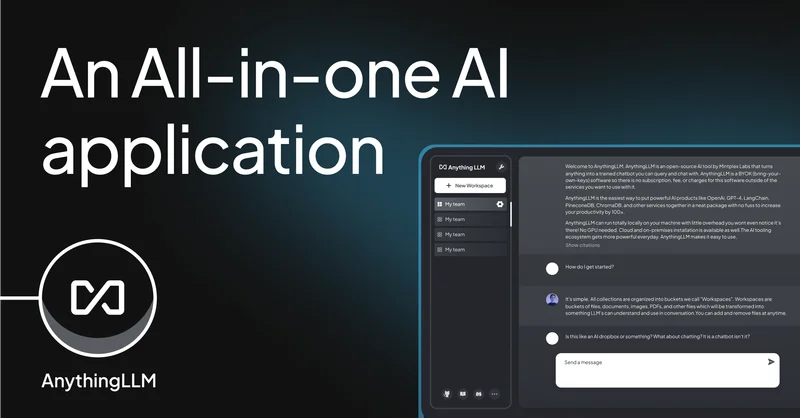

AnythingLLM is a privacy-focused AI application for interacting with documents and automating workflows; it requires no setup.