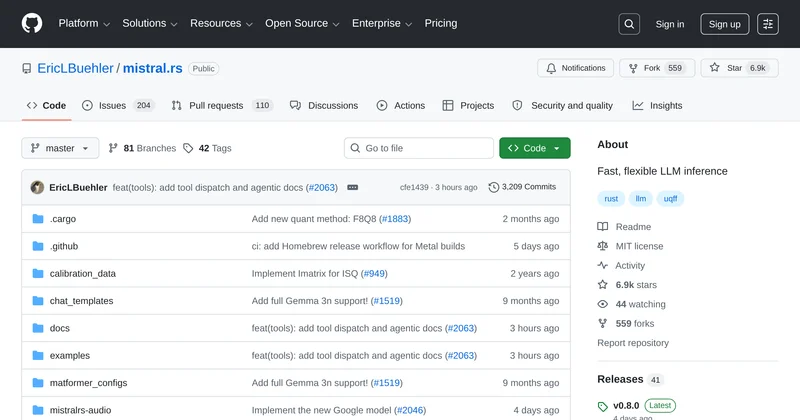

mistral.rs is a fast, flexible LLM inference tool with Rust and Python SDKs and multimodal support.


Summary

mistral.rs is a fast, flexible inference tool for large language models (LLMs), available through both Rust and Python SDKs. It runs a range of models with minimal configuration, which makes it accessible to developers who want to add LLM capabilities to their applications.

Key features:

- Multimodal Support - Handles text, image, video, and audio inputs.

- Quantization Control - Offers full control over quantization methods, allowing users to optimize performance.

- Built-in Web UI - Provides an instant web interface for interacting with models.

- Hardware-Aware Tuning - Automatically benchmarks the host hardware and selects suitable settings for it.

- Flexible SDKs - Available in both Python and Rust for easy integration.

Use cases include deploying LLMs for chat applications and image generation.
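As a rough illustration of the chat use case, the sketch below builds and sends an OpenAI-style chat completion request from Python using only the standard library. It assumes a mistral.rs server is already running locally and exposes an OpenAI-compatible `/v1/chat/completions` route. The host, port, model name, and the helper names `build_chat_request` and `send_chat_request` are placeholders chosen for this example, not values taken from the project.

```python
import json
import urllib.request

# Assumption: a local server with an OpenAI-style chat completions route.
# The host, port, and path are placeholders; adjust them to your setup.
BASE_URL = "http://localhost:1234/v1/chat/completions"


def build_chat_request(prompt, model="default", max_tokens=256):
    """Build an OpenAI-style chat completion payload (hypothetical helper)."""
    return {
        "model": model,  # placeholder model identifier
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }


def send_chat_request(payload, url=BASE_URL):
    """POST the payload as JSON and return the text of the first reply."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    # Assumes the OpenAI-style response shape: choices[0].message.content
    return body["choices"][0]["message"]["content"]
```

With a server running, `send_chat_request(build_chat_request("Hello"))` would return the model's reply as a string. Building the payload is kept separate from sending it so the request shape can be inspected without a live server.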
